Read Your Movies as Automatically Generated Comic Books

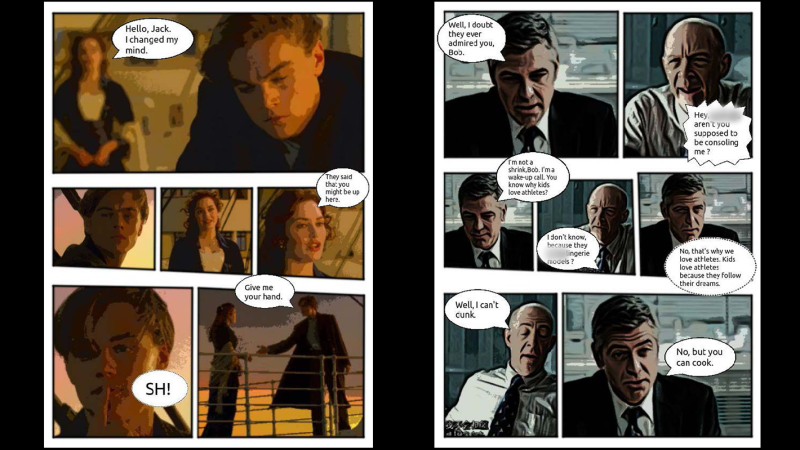

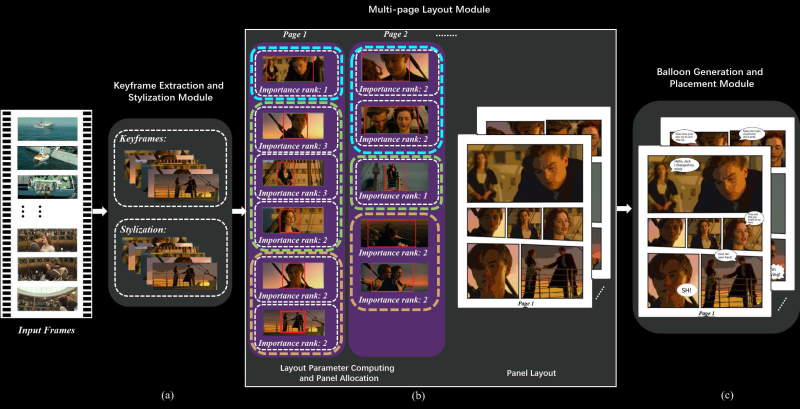

A research paper from Dalian University of Technology in China and City University of Hong Kong (direct PDF link) outlines a system that automatically generates comic books from videos. But how can an algorithm boil a video scene down to a still image that properly reflects the gravity of that scene? This impressive feat is accomplished by saving two still images per second, then segmenting the frames into scenes through region-of-interest analysis and importance ranking.
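To make the sampling step concrete, here is a minimal sketch of picking two frames per second from a clip. The function name `sample_frame_indices` and its parameters are hypothetical and not taken from the paper; it only shows the arithmetic of mapping a sampling rate onto frame numbers, not the team's actual implementation.

```python
def sample_frame_indices(total_frames: int, video_fps: float,
                         samples_per_second: float = 2.0) -> list[int]:
    """Return the frame indices to keep when saving a fixed number of stills per second.

    Assumption: frames are numbered from 0 and the video has a constant frame rate.
    """
    if total_frames <= 0 or video_fps <= 0 or samples_per_second <= 0:
        return []

    duration = total_frames / video_fps  # clip length in seconds
    indices: list[int] = []
    k = 0
    while True:
        t = k / samples_per_second       # timestamp of the k-th sample
        if t >= duration:
            break
        idx = int(round(t * video_fps))  # nearest frame to that timestamp
        if idx >= total_frames:
            break
        # Skip duplicates, which can occur if we sample faster than the frame rate.
        if not indices or idx != indices[-1]:
            indices.append(idx)
        k += 1
    return indices


if __name__ == "__main__":
    # A 2-second clip at 24 fps yields four stills.
    print(sample_frame_indices(total_frames=48, video_fps=24))
```

In a real pipeline these indices would be handed to a video decoder. The later steps (scene segmentation, region-of-interest analysis, importance ranking) would then run on the sampled stills.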

For its next trick, speech for each scene is processed by combining subtitle information with the audio track of the video. The audio is analyzed for emotion to determine the appropriate speech bubble type and the size of the subtitle text. Frames are even analyzed to establish which person is speaking for proper placement of the bubbles. The system can then create layouts of the keyframes, determining panel sizes for each page based on the region-of-interest analysis.
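As a rough illustration of the layout idea, the sketch below splits one row of a page among panels in proportion to an importance score per keyframe. The scoring scale, the function name `panel_widths`, and the proportional rule itself are assumptions made for this example; the paper's layout method may well differ.

```python
def panel_widths(importance: list[float], row_width: int) -> list[int]:
    """Divide row_width pixels among panels in proportion to their importance.

    Uses largest-remainder rounding so the integer widths add up to row_width exactly.
    """
    if not importance:
        return []
    if any(score < 0 for score in importance):
        raise ValueError("importance scores must be non-negative")

    total = sum(importance)
    if total == 0:
        # No panel stands out, so share the row equally.
        shares = [row_width / len(importance)] * len(importance)
    else:
        shares = [row_width * score / total for score in importance]

    widths = [int(share) for share in shares]  # round down first
    leftover = row_width - sum(widths)         # pixels lost to rounding
    # Hand the leftover pixels to the panels with the largest fractional parts.
    by_fraction = sorted(range(len(shares)),
                         key=lambda i: shares[i] - widths[i],
                         reverse=True)
    for i in by_fraction[:leftover]:
        widths[i] += 1
    return widths


if __name__ == "__main__":
    # A keyframe three times as important gets three times the width.
    print(panel_widths([3.0, 1.0], row_width=800))
```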

The process is completed by stylizing the keyframes with flat color through quantization, for that classic cel shading look, and then populating the layouts with each frame and word balloon.
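The flat-color effect can be approximated with simple uniform quantization, where each color channel is snapped to one of a few levels. This is only a sketch of the general technique: the paper does not say here which quantization method it uses, and the function names and the choice of four levels are assumptions.

```python
Pixel = tuple[int, int, int]


def quantize_channel(value: int, levels: int) -> int:
    """Snap an 8-bit channel value to the center of one of `levels` equal-width buckets."""
    if levels < 2:
        raise ValueError("need at least two levels")
    step = 256 / levels
    bucket = min(int(value / step), levels - 1)
    return int(bucket * step + step / 2)


def quantize_pixel(pixel: Pixel, levels: int = 4) -> Pixel:
    """Quantize each of the red, green, and blue channels independently."""
    r, g, b = pixel
    return (quantize_channel(r, levels),
            quantize_channel(g, levels),
            quantize_channel(b, levels))


def quantize_image(rows: list[list[Pixel]], levels: int = 4) -> list[list[Pixel]]:
    """Quantize an image stored as rows of (r, g, b) tuples."""
    return [[quantize_pixel(p, levels) for p in row] for row in rows]


if __name__ == "__main__":
    # A smooth gradient collapses into a handful of flat color bands.
    gradient = [[(x, x, x) for x in range(0, 256, 8)]]
    flat = quantize_image(gradient, levels=4)
    print(len({p for row in flat for p in row}), "distinct colors")
```

Fewer levels give larger flat regions and a more cartoon-like look, at the cost of visible banding in smooth areas such as skies or skin.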

The team conducted a study with 40 users, pitting their results against previous techniques that require more human intervention, and still bested them in every measure. Like any great superhero, the team still sees room for improvement. In the future, they would like to improve the accuracy of keyframe selection, and they propose using a neural network to do so.

Thanks to [Qes] for the tip!
